Visibility mechanics

With Update 1.53 "Fire Storm" comes the move to Dagor Engine 4.0, along with significant changes to how ground vehicles and aircraft are seen in the game.

But let's start with some background.


Part I. Visibility in the Air

How is visibility defined for an aircraft? One often hears that “they’re more visible in real life”, “you can recognize a plane’s silhouette from X kilometers away” or “in the game, Y is more/less visible, more/less realistic”.

It’s far easier to detect an aircraft by sound than by sight. On a windless day in clear weather, the sound of an engine can be heard from 10-12 km away, while a single-engine aircraft can only be seen without binoculars at a distance of 8-10 km.

View distance table

When estimating the distance to an aircraft by eye, the following must be considered:

Note the change in LoD (Level of Detail).

Human eye's visual resolution against the azure sky

| Distance (m) | What can be seen with binoculars | What can be seen with the naked eye |
|---|---|---|
| 8,000-10,000 | Silhouettes in the form of blurry dots. | Aircraft are either not visible or visible as small black dots. |
| 6,000-8,000 | Aircraft silhouettes. Details not visible. | Silhouettes in the form of dots. |
| 5,000-6,000 | Aircraft silhouettes. Details not visible. | Aircraft silhouettes. Details not visible. |
| 4,000 | | Aircraft silhouettes. Details not visible. |
| 3,000 | | |
| 2,000 | | |
| 1,000 | | |
| 500 | | |

Human visual perception

In reality, to understand what is visible at a distance and how, we first need to understand how human vision works in general, how images are rendered on the screen, and how the two relate to each other.

Humans have a field of view of roughly 160x130 degrees. Angular resolution is 1-2′ (roughly 0.02°-0.03°), which corresponds to about 30-60 cm at a distance of 1 km. This is a very rough interpretation: the eye detects light over an exposure time, and the brain reconstructs the picture from ‘compressed’ data that changes over time.
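The 30-60 cm figure follows from basic trigonometry. An object of size s at distance d subtends an angle of 2·atan(s / 2d). The sketch below inverts that relation. It takes the 1′ and 2′ values above as given and ignores everything the brain does on top of raw optics.

```python
import math


def resolvable_size_m(arcminutes: float, distance_m: float) -> float:
    """Smallest feature (in metres) that subtends `arcminutes` at `distance_m`."""
    angle_rad = math.radians(arcminutes / 60.0)
    # An object of size s at distance d subtends 2*atan(s / (2d)); solve for s.
    return 2.0 * distance_m * math.tan(angle_rad / 2.0)


for arcmin in (1.0, 2.0):
    size_cm = resolvable_size_m(arcmin, 1000.0) * 100
    print(f"{arcmin:.0f}' at 1 km ~ {size_cm:.0f} cm")
```

This gives about 29 cm for 1′ and 58 cm for 2′, matching the "30-60 cm" range.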

Our peripheral vision is significantly worse: it mostly registers movement and is heavily distorted. Directly in front of us, we see with good resolution (assuming excellent eyesight) across a viewing angle of ~40 degrees.

Apart from that, our eyes constantly move, “scanning” the entire field of view. This compensates for the so-called blind spot, an area where we don’t see anything at all. We don’t notice the blind spot, just as we don’t notice the distortion in our peripheral vision, thanks to how our brain works. It constantly performs image recognition, reconstructing the colours of objects, their shape and their position. At close range and directly in front of you, because you have two eyes, your brain gives you the size and position of the objects you’re looking at. This is called binocular vision, or stereopsis. The theoretical limit of depth perception by the eyes is about 1 km, but in most cases it is much lower (about 100-300 meters or less). Yet if you close one eye, the world doesn’t change and doesn’t suddenly become insufferably flat. This, too, is due to the way our brain works.

Meanwhile, at such distances, characters seen through a game camera are usually just a few pixels in size.

Reference images for 2D illustration:

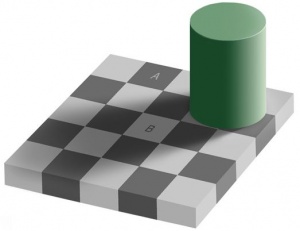

- Recognition – Illusions and Interpretation errors

- Contrast & Symmetry Illusions

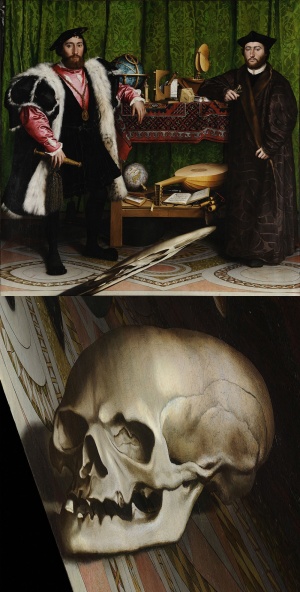

- Geometry: 3D depth perception on a 2D surface – your monitor screen is no more than a painter's canvas

Technically, the eye contains 120 million monochrome and 5 million colour receptors, but the flow of visual information to the brain involves some losses. The result is roughly equivalent to a video stream of 75 frames per second at 30 megapixels.

For comparison, the best monitors currently run at ~4096 × 3112 at 60 fps (and you’ll need very powerful hardware to drive one), with a viewing angle of roughly 30 degrees (on a flat display). On the whole, this is not inferior to the resolution of the human eye over the same angle (not counting the luminance range). However, the eye takes in an angle several times wider, so the monitor is just a “window” from our world into the world of virtual reality. The overwhelming majority of players play at 1920x1080 or lower at 60-90 fps, which is an order of magnitude worse than what our sharp eyes can perceive. Our “little window” into the virtual world is also, as a rule, a little murky and much dimmer. The monitor has just 256 brightness shades, while the real eye can distinguish many times that.
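To put these figures side by side, the sketch below multiplies resolution by frame rate. It counts pixels only and ignores colour depth and brightness range. The eye's "30 megapixels at 75 fps" is the rough equivalence quoted above, not a measured display specification.

```python
def pixel_rate(width_px: int, height_px: int, fps: float) -> float:
    """Pixels delivered per second by a display (or an equivalent 'video stream')."""
    return width_px * height_px * fps


eye = 30e6 * 75                              # rough estimate quoted above
best_monitor = pixel_rate(4096, 3112, 60)
full_hd = pixel_rate(1920, 1080, 60)

print(f"eye               ~ {eye / 1e9:.2f} Gpx/s")
print(f"4096x3112 @ 60 Hz ~ {best_monitor / 1e9:.2f} Gpx/s")
print(f"1920x1080 @ 60 Hz ~ {full_hd / 1e9:.3f} Gpx/s")
print(f"eye / Full HD     ~ {eye / full_hd:.0f}x")
```

A ratio of roughly 18 is consistent with Full HD at 60 fps being about an order of magnitude below the eye.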

But that’s not all. The screen occupies 30-45 degrees of our field of view, so for natural perception the game camera has to show 30-45 degrees too (this is also roughly the field of view of our central vision). If the game camera shows a wider angle, our brain will incorrectly reconstruct the positions and sizes of objects. Everything will seem small and distant, and the limited resolution of our monitor will be even more noticeable.
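You can check how much of your own field of view the screen occupies from its width and your viewing distance. The setup below is hypothetical: a 24-inch 16:9 monitor (about 53 cm wide) viewed from 70 cm.

```python
import math


def subtended_angle_deg(width_cm: float, viewing_distance_cm: float) -> float:
    """Horizontal angle (degrees) that a flat screen occupies in the viewer's field of view."""
    return math.degrees(2.0 * math.atan(width_cm / (2.0 * viewing_distance_cm)))


# Hypothetical desk setup: 24" 16:9 monitor (~53.1 cm wide) viewed from 70 cm.
print(f"{subtended_angle_deg(53.1, 70.0):.1f} degrees")
```

This comes to roughly 41-42 degrees, inside the 30-45 degree range above. A camera showing 90 degrees therefore squeezes about twice the natural angle onto the same screen.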

Nonetheless, practically every game uses a field of view of around 90 degrees. This is a compromise between the ~130 degree view of the human eye and the 30-40 degree view at which a human correctly perceives the size of an object.

The most noticeable consequence of this compromise is a distorted perception of all sizes. For example, in the popular game Counter-Strike, most maps are no larger than 200 meters across. That is significantly less than the effective range of most rifles, and far less than that of sniper rifles.

More than anything, game cameras resemble the image produced by action cameras such as the GoPro. You can easily find videos shot with such cameras on YouTube and see how small people look at a distance of just a few meters.


Naturally, at such a viewing angle, an aircraft four kilometers away on a Full HD monitor is about four pixels wide and only half a pixel tall; in other words, it disappears from view. Even at two kilometers, the aircraft's wings are well under a pixel thick, while the fuselage is around one pixel. This means that with an ordinary game camera, an aircraft starts to flicker and disappear at two kilometers and is gone entirely by four kilometers. With MSAA 4X, an aircraft theoretically remains visible at roughly twice those distances (although it is still very small).
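These pixel counts come from a simple perspective (pinhole) projection. At distance d, a camera with horizontal field of view θ shows a strip 2·d·tan(θ/2) metres wide across the full screen width. With square pixels, the same pixels-per-metre factor applies vertically.

The aircraft dimensions below are hypothetical illustration values, not taken from the game's models.

```python
import math


def projected_pixels(size_m: float, distance_m: float,
                     hfov_deg: float = 90.0, width_px: int = 1920) -> float:
    """Approximate on-screen size (pixels) of an object near the centre of a rectilinear view."""
    # The view plane at distance d is 2*d*tan(hfov/2) metres wide and maps onto width_px pixels.
    view_width_m = 2.0 * distance_m * math.tan(math.radians(hfov_deg) / 2.0)
    return size_m * width_px / view_width_m


# Hypothetical aircraft: ~11 m wingspan, ~1.5 m fuselage height.
for distance in (2000, 4000):
    wing = projected_pixels(11.0, distance)
    fuselage = projected_pixels(1.5, distance)
    print(f"{distance} m: wingspan ~ {wing:.1f} px, fuselage ~ {fuselage:.2f} px")
```

At 90° and 1920 px, the factor is 0.24 px per metre at 4 km. Because it falls off as 1/distance, halving the size at which an object stays readable doubles the distance at which it remains visible.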

Apart from that, the human eye is excellent at detecting motion; we are better at this than at recognizing outlines. But on a Full HD monitor, the most noticeable movement of an aircraft at such distances is so-called aliasing: pixels appearing and disappearing (which MSAA counteracts). On the whole, as rendering technology advances, the ground becomes more detailed. This makes it harder to recognize an object made up of only a few pixels against a contrasting, detail-rich background.

As you can probably see by now, showing things in the game “like in real life” is extremely difficult. Technically, a monitor cannot reproduce an object with the detail the sharp human eye perceives; it falls short by more than a factor of two unless the field of view is sacrificed. “Zooming in” in the game does exactly that: it narrows the game camera’s viewing angle to one that roughly matches the real resolution of the human eye. For this reason, most games use certain tricks for in-game objects.
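The "more than a factor of two" gap can be estimated by comparing the angle a single pixel spans with the eye's ~1′ resolution. The sketch measures the pixel at the centre of a rectilinear view, where pixels span the largest angle. It also computes the field of view at which a centre pixel shrinks to 1′, which is roughly what zooming in approximates.

```python
import math


def centre_pixel_arcmin(hfov_deg: float, width_px: int) -> float:
    """Angle (arcminutes) subtended by one pixel at the centre of a rectilinear view."""
    focal_px = (width_px / 2.0) / math.tan(math.radians(hfov_deg) / 2.0)
    return math.degrees(math.atan(1.0 / focal_px)) * 60.0


def fov_matching_eye(width_px: int, eye_arcmin: float = 1.0) -> float:
    """Horizontal FOV (degrees) at which a centre pixel spans `eye_arcmin` arcminutes."""
    focal_px = 1.0 / math.tan(math.radians(eye_arcmin / 60.0))
    return math.degrees(2.0 * math.atan((width_px / 2.0) / focal_px))


print(f"90 deg, 1920 px: {centre_pixel_arcmin(90.0, 1920):.1f}' per pixel")
print(f"FOV for 1' per pixel at 1920 px: {fov_matching_eye(1920):.1f} deg")
```

At 90° on Full HD, a centre pixel spans about 3.6′, several times coarser than 1′. Matching the eye needs a field of view of roughly 31°, in line with the 30-45 degree figure above.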

Implementation in War Thunder

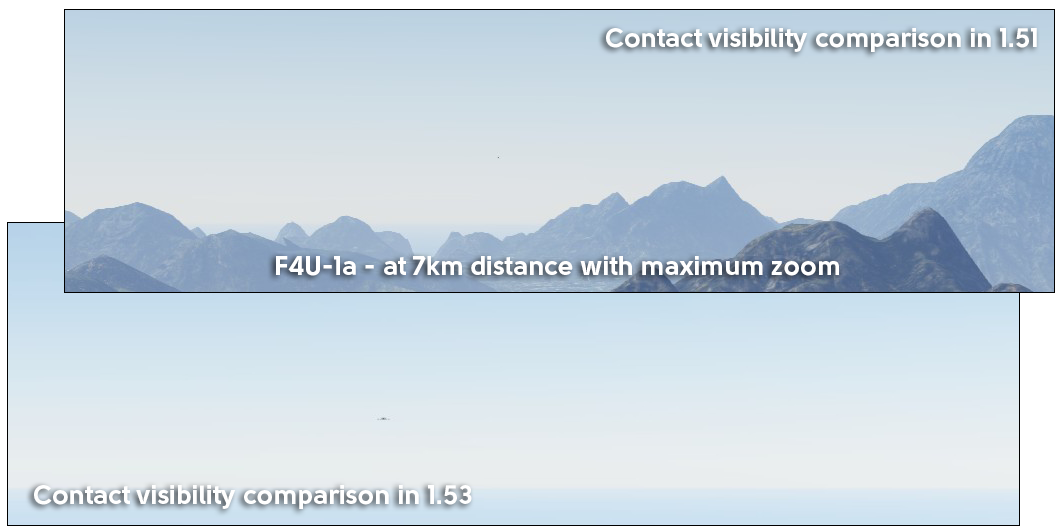

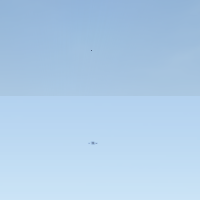

In War Thunder, up until version 1.53, one of the main tricks of this kind for aircraft was the so-called “contact” (or “fly”), a special object representing a visual contact. Contacts are drawn from the distance at which the aircraft begins to disappear, shrinking below one pixel (the exact distance depends on the size of the plane, the camera's viewing angle and the monitor resolution). They remain until the moment of “contact loss”, which depends on various factors and usually occurs around 8-12 km.

In most game modes, markers are also drawn at certain distances. These are special interface elements that compensate for the technical limitations of monitors: a contact that has actually been detected and recognized is shown as a bright object. In real modern warfare, such “friend/foe” markers are an important feature of a pilot’s (or tanker’s) high-tech helmet. These helmets sometimes solve the same problem as the game. They don’t just make life easier for the tanker or pilot; they also compensate for the limited resolution of external cameras and of the helmet display itself.

The markers are not meant to mimic those of modern warfare, but to come as close as possible to “how the pilot perceives information in reality”. To that end, they are displayed depending on many factors, including:

- visibility conditions, lighting, fog and clouds;
- whether objects are directly against the sun;
- their height relative to the horizon (seeing a plane against the background of land is a lot harder in real life too);
- the pilot’s characteristics.

In 1.53, all these mechanics were updated, refined and made clearer for the player, but that’s a topic for another article (or the 1.53 changelog).

In our Simulator Battles, which are designed to recreate the battles of the Second World War as authentically as possible, there are no markers, so as not to spoil the lifelike image with extraneous elements. At the same time, vehicles are much harder to see than in real life. It is as if you were watching a real-life battle through an action camera fixed to the pilot’s head. In fact, it’s a little better than that, thanks to a lot of work on making the game more lifelike than a digital video camera. Specifically, “contacts” appear only at distances where the aircraft is definitely no longer visible. Before that, there are moments when the plane is very hard to see (it’s just a few pixels, after all!) but is not yet covered by a “contact”/“fly”, because a contact would hide the aircraft's orientation and maneuvers. At these “medium” distances, aircraft were very easy to lose sight of without markers.

In update 1.53, a special level of detail has been created for aircraft at medium distances. In practice, the game draws the plane at a different viewing angle and at a higher resolution (supersampling anti-aliasing) so that it doesn’t disappear from view entirely. As a result, at medium distances a plane may look small, but it now remains noticeable enough without losing its shape or outline. The new renderer makes maps more realistic in terms of lighting and diffusion, and therefore less contrasting at long distances. Together, these changes significantly improve the ability to track and detect targets in aerial battles, making them more comfortable. We hope that fans of flying will appreciate this innovative concept.
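The sequence described above (full model, then the medium-distance supersampled level of detail, then a contact, then nothing after contact loss) can be sketched as a choice driven by projected on-screen size. The switch-over thresholds below are hypothetical illustration values, not the game's actual settings. Only the 8-12 km contact-loss range comes from the text.

```python
import math
from enum import Enum


class AircraftView(Enum):
    MODEL = "regular model"
    SUPERSAMPLED = "medium-distance supersampled LoD"
    CONTACT = "contact ('fly')"
    NONE = "not drawn"


# Hypothetical thresholds chosen for illustration only.
SUPERSAMPLE_BELOW_PX = 8.0    # below this on-screen size, switch to the supersampled LoD
CONTACT_BELOW_PX = 2.0        # below this, fall back to a contact
CONTACT_LOSS_M = 10_000.0     # article: contact loss usually happens around 8-12 km


def on_screen_px(size_m: float, distance_m: float,
                 hfov_deg: float = 90.0, width_px: int = 1920) -> float:
    """Projected size (pixels) near the centre of a rectilinear camera view."""
    return size_m * width_px / (2.0 * distance_m * math.tan(math.radians(hfov_deg) / 2.0))


def choose_view(size_m: float, distance_m: float,
                hfov_deg: float = 90.0, width_px: int = 1920) -> AircraftView:
    """Pick how to draw an aircraft of the given size at the given distance."""
    if distance_m > CONTACT_LOSS_M:
        return AircraftView.NONE
    px = on_screen_px(size_m, distance_m, hfov_deg, width_px)
    if px >= SUPERSAMPLE_BELOW_PX:
        return AircraftView.MODEL
    if px >= CONTACT_BELOW_PX:
        return AircraftView.SUPERSAMPLED
    return AircraftView.CONTACT


for d in (1000, 3000, 6000, 12000):
    print(d, choose_view(11.0, d).value)   # hypothetical 11 m wingspan
```

The decision uses projected size rather than raw distance. As a result, a narrower field of view (zooming) or a higher resolution pushes each switch-over further out, matching the note that the contact distance depends on plane size, camera angle and monitor resolution.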

At the same time, the image's dynamic range has been increased 8-16 times. Previously, at shorter ranges (up to a kilometer), a glint on a plane’s wing could practically “disappear” by blending in with a neighboring non-glinting surface. Now you can determine an enemy aircraft’s orientation and the maneuvers it is performing more precisely.

Part II. Visibility on the Ground

In the air, the main "obstacles" to visibility are great distances and limited screen resolution at the wide viewing angle that aerial combat demands, since enemies can be anywhere in three-dimensional space. On the ground, the main obstacles are “natural” barriers: houses, grass, trees and bushes. As a rule, grass obstructs vision only in sniper mode. In the standard view, the camera sits relatively high above the vehicle, and even tall grass on hills very rarely covers the outlines of enemies. In sniper mode, however, grass on a hillside sometimes ends up right on the line between your sight and the enemy. In real life this problem does occur for tanks, but relatively rarely, as tall, dense grass is quite a rare feature of battlefields. Moreover, the vehicle's crew and supporting infantry would deal with this issue. For this reason, War Thunder has an in-game setting, “show grass in sniper mode”. It makes the game easier to play and removes this difference in game mechanics between players on different settings.

Visibility behind large objects (houses, rocks, hills) is calculated on the server side. The game client simply receives no information about ground vehicles that are completely hidden by large objects or are far enough away. In 1.53, the entire system of detection and recognition (transmission of markers) has been completely overhauled. In 1.51 and earlier, the player only received information about ground vehicles he could see directly, plus those within a certain noise radius around his own vehicle (enemy vehicles that were firing had increased radii). Recognition (the appearance of a marker) was determined by how well the enemy vehicle was hidden. The crew characteristics (long-distance vision) influenced the radii, but in actual battles the visibility of a marker was usually determined by how well the vehicle was covered by objects. The crew's actions were practically not taken into account at all. At longer ranges, targets appeared only if your allies had selected them using the target select button.

In “Fire Storm”, all this has fundamentally changed. Ground vehicles now look “ahead” in a relatively narrow sector based on the direction the vehicle is facing, and “around” in a wide sector based on the direction of the camera. A starter crew now "detects" (i.e. the server gives it information about) a hiding ground vehicle, one that is neither moving nor shooting, at a distance of 500-750 meters. All numbers here and below are preliminary and may change. A fully trained crew will detect it at 2-3 times that range. A ground vehicle that moves or shoots (even with machine guns) immediately unmasks itself and becomes visible from a great distance. This corresponds to how the vision of humans (our tankers) actually works in real life. In the future, the game will most likely start taking camouflage and vehicle shape into account when calculating vision, for example the colour of the camouflage and the size of the vehicle.
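The detection rules above can be expressed as a server-side check:

- Is the target fully hidden by large objects?
- Is it inside the narrow hull sector or the wide camera sector?
- Is it within a range set by crew skill, or within a much longer range if it is moving or firing?

The sketch below is a simplified illustration of that logic. Sector widths, the skill scaling, and the "great distance" value are hypothetical, and occlusion is reduced to a flag. It is not the game's actual implementation.

```python
import math
from dataclasses import dataclass

# Hypothetical stand-ins; the article gives only preliminary ranges.
BASE_RANGE_M = 500.0          # starter crew vs. a hidden vehicle (article: 500-750 m)
MAX_SKILL_MULTIPLIER = 3.0    # fully trained crew (article: 2-3 times the base range)
UNMASKED_RANGE_M = 5000.0     # "visible from a great distance" once moving or firing
HULL_SECTOR_DEG = 60.0        # narrow "ahead" sector around the hull heading
CAMERA_SECTOR_DEG = 120.0     # wide "around" sector around the camera heading


@dataclass
class Target:
    x: float
    y: float
    moving: bool = False
    shooting: bool = False
    occluded: bool = False    # fully hidden by houses, rocks or hills (server-side check)


def in_sector(dx: float, dy: float, heading_deg: float, width_deg: float) -> bool:
    """True if direction (dx, dy) lies within width_deg centred on heading_deg."""
    bearing = math.degrees(math.atan2(dy, dx))
    diff = (bearing - heading_deg + 180.0) % 360.0 - 180.0   # signed difference in [-180, 180)
    return abs(diff) <= width_deg / 2.0


def detection_range_m(crew_skill: float) -> float:
    """crew_skill in [0, 1]; hypothetical linear scaling from 1x to MAX_SKILL_MULTIPLIER."""
    return BASE_RANGE_M * (1.0 + (MAX_SKILL_MULTIPLIER - 1.0) * crew_skill)


def server_reveals(observer: tuple[float, float], hull_deg: float, camera_deg: float,
                   crew_skill: float, target: Target) -> bool:
    """Decide whether the server would send this target to the observer's client."""
    if target.occluded:
        return False
    dx, dy = target.x - observer[0], target.y - observer[1]
    if not (in_sector(dx, dy, hull_deg, HULL_SECTOR_DEG)
            or in_sector(dx, dy, camera_deg, CAMERA_SECTOR_DEG)):
        return False
    # Moving or firing (even machine guns) unmasks the vehicle at long range.
    limit = UNMASKED_RANGE_M if (target.moving or target.shooting) else detection_range_m(crew_skill)
    return math.hypot(dx, dy) <= limit
```

For example, a hidden tank 600 m straight ahead is missed by a starter crew (500 m) but found by a fully trained one (1,500 m in this sketch). The same tank behind you is found only if you turn the camera toward it. Whether the sector check also applies to moving or firing targets is an assumption here.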

Your tank crew will now also automatically receive and transmit messages about the enemy’s location. The distance at which your crew detects a target using radio transmissions from allies is defined by the "radio operation" skill. Messages are sent to other crews if the ground vehicle is under enemy fire, has hit an enemy vehicle, or aims at the target. In Realistic Mode, where there are no markers above enemies, a marker meaning “I saw an enemy here” is placed on the map itself to attract attention. We hope this will widen the range of tactical options. Ambushing or stalking enemies from behind should now be more effective, since the enemy won’t notice you until you open fire. At the same time, well-coordinated team actions can significantly improve your gameplay.
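The radio-reporting rule combines a trigger (under fire, hit an enemy, or aiming at the target) with a reception distance set by the "radio operation" skill. In the sketch below, the range values and linear scaling are hypothetical. It also assumes the distance is measured from the receiving vehicle to the reported enemy, which the text does not specify.

```python
from dataclasses import dataclass

# Hypothetical values: the article says only that the "radio operation" skill sets this distance.
MIN_RADIO_RANGE_M = 1000.0
MAX_RADIO_RANGE_M = 3000.0


@dataclass
class CrewState:
    under_fire: bool = False
    hit_enemy: bool = False
    aiming_at_target: bool = False


def sends_report(state: CrewState) -> bool:
    """Triggers listed in the article: under enemy fire, hit an enemy, or aiming at the target."""
    return state.under_fire or state.hit_enemy or state.aiming_at_target


def radio_range_m(radio_skill: float) -> float:
    """radio_skill in [0, 1]; hypothetical linear scaling of the reception distance."""
    return MIN_RADIO_RANGE_M + (MAX_RADIO_RANGE_M - MIN_RADIO_RANGE_M) * radio_skill


def receives_report(distance_to_target_m: float, radio_skill: float) -> bool:
    """Assumption: distance is measured from the receiving vehicle to the reported enemy."""
    return distance_to_target_m <= radio_range_m(radio_skill)
```

In Realistic Mode, a received report would surface as the "I saw an enemy here" map marker rather than a marker above the enemy.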

Apart from large objects and grass, bushes and trees on War Thunder’s open maps also affect visibility and detection. Some War Thunder players use Ultra Low Quality mode (a compatibility mode for legacy video cards) not because they have weak computers, but because it gives them a certain advantage in battle. Bushes around their vehicle don’t obscure their aiming view, and the shorter maximum draw distance for trees can affect how well an object is covered by trees. Although these players were relatively few, the existence of such an advantage somewhat spoiled the game for those who wanted to get the most enjoyment out of their powerful gaming systems. Alas, this was the price of an extremely simplified but high-performance renderer for old video cards.

The maximum draw distance for trees has a far smaller effect in the new update, as detection distance is now more closely tied to crew skills and activity. Nonetheless, in 1.53, thanks to the improved capabilities of Dagor Engine 4.0, bushes and trees seen from a ground vehicle are now drawn at the same distances in all modes and at all settings (up to and including High). At the same time, fog remains slightly thicker in Ultra Low Quality.

The increased tree draw distance may still have a small effect on performance in certain configurations, but in most cases it should not be significant. Meanwhile, the optimizations made to Ultra Low Quality mode bring a general improvement in quality and detail.

We hope that you can check these changes out for yourselves and enjoy the work we’ve done in 1.53 “Fire Storm” very soon.